uncertainty

The reality we can put into words is never reality itself.

Perhaps—

If we looked too closely,

we could understand the void.

But what if the very act of looking changes the void itself?

The Uncertainty Principle in Quantum Mechanics states that we cannot simultaneously know both the position and momentum of a particle with perfect accuracy. This is expressed mathematically as

$$\Delta x \, \Delta p \geq \frac{\hbar}{2}$$

where $\Delta x$ is the uncertainty in position, $\Delta p$ is the uncertainty in momentum, and $\hbar$ is the reduced Planck constant¹.

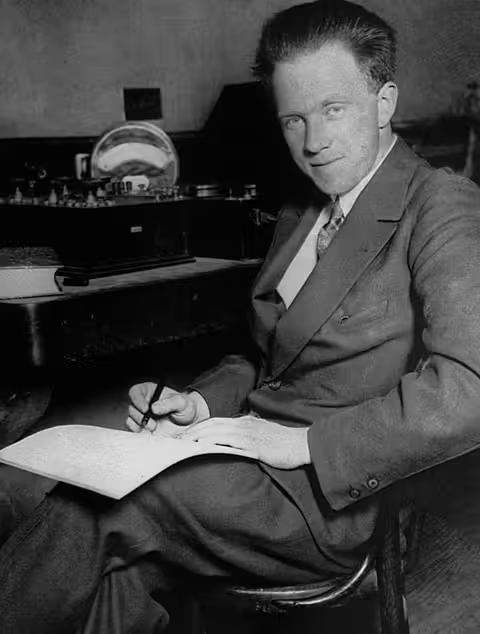

Werner Heisenberg, 1925

My team at work has recently been profiling our services with a view to optimizing performance, and I noticed an interesting analog to the Uncertainty Principle in software development: you cannot accurately measure, at the same time, both the precise point at which an operation occurs in a program's pipeline and the precise duration of that operation. There always seems to be a trade-off between the two.

This is because measuring time (say, a call to `performance.now()`) has side effects that affect other running processes, including the very operation being measured. The more precise or granular the measurement, the more these side effects affect the operation itself and its surrounding operations.

On the other hand, if you measure at a very coarse level, you end up measuring too much extraneous work and the data becomes less useful.
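To get a rough feel for the cost of the probe itself, here is a minimal TypeScript sketch that reads the clock in a tight loop and averages the elapsed time. The helper name `estimateTimerCostMs` and the iteration count are assumptions made for illustration, and it relies on a global `performance` object (available in browsers and recent Node.js versions).

```typescript
// Estimate the average cost of a single performance.now() call by reading
// the clock many times in a tight loop and dividing the elapsed time.
function estimateTimerCostMs(iterations: number): number {
  let sink = 0; // accumulate readings so the loop has an observable result
  const start = performance.now();
  for (let i = 0; i < iterations; i++) {
    sink += performance.now();
  }
  const elapsed = performance.now() - start;
  if (!Number.isFinite(sink)) {
    throw new Error("unexpected non-finite clock reading");
  }
  return elapsed / iterations;
}

// Hypothetical iteration count; larger values smooth out noise.
const iterations = 1000000;
const costMs = estimateTimerCostMs(iterations);

// Convert milliseconds to nanoseconds for readability.
console.log(`performance.now() costs roughly ${(costMs * 1e6).toFixed(1)} ns per call`);
```

The number this prints is itself only an estimate: the loop is subject to the same effect it is trying to measure, and some environments deliberately coarsen timer resolution, so treat it as an order of magnitude rather than a precise figure.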

coarse approach

For example: in a large system, measuring performance from a relatively high-level function such as `main` will yield very coarse data that is not very actionable and does not measure the precise operation we care about.

    fn main() {
        let start = performance.now();
        start_work(); // many unknown operations happening here
        let end = performance.now();
    }

How does one interpret measurements here? If the aim is to measure topline metrics such as request latency, this is useful. But the moment one wants to optimize the system, the measurement data gathered here gives very little insight into the performance of individual operations, making it hard to identify bottlenecks.

granular approach

Alternatively, one could try measuring more granular operations and see how it goes:

    fn process_requested_image(handle: &InType): OutType {
        let start = performance.now();
        // implementation...
        let end = performance.now();
    }

Or, make it even more granular:

    fn open_image_file(path: &str): ImageFile {
        let start = performance.now();
        // implementation...
        let end = performance.now();
    }

    fn decode_image_data(file: &ImageFile): ImageData {
        let start = performance.now();
        // implementation...
        let end = performance.now();
    }

    fn resize_image(data: &ImageData, size: Size): ResizedImage {
        let start = performance.now();
        // implementation...
        let end = performance.now();
    }

    fn process_requested_image(handle: &InType): OutType {
        let file = open_image_file(handle.path);
        let data = decode_image_data(&file);
        let resized = resize_image(&data, handle.size);
        // ...
    }

From a profiling perspective, this is much more useful data. But more granular measurement results in more profiling calls being made, which in turn has more side effects on the system. In this case, `performance.now()` can significantly change the behavior of `process_requested_image` itself. If this is a utility function called frequently across the system, these low-level side effects accumulate with every call, and their total cost grows with the size of the system.
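To make that accumulation concrete, here is a minimal TypeScript sketch that counts how many clock reads granular instrumentation adds. The `timed` and `now` helpers and the `timerCalls` counter are hypothetical names introduced for illustration, and the stage bodies are placeholder work standing in for the real image pipeline; only the function names are borrowed from the example above.

```typescript
// Per-label duration samples, in milliseconds.
const timings = new Map<string, number[]>();

// Counts every clock read made on behalf of instrumentation.
let timerCalls = 0;

function now(): number {
  timerCalls++;
  return performance.now();
}

// Runs `op`, records how long it took under `label`, and returns its result.
function timed<T>(label: string, op: () => T): T {
  const start = now();
  try {
    return op();
  } finally {
    const elapsed = now() - start;
    const samples = timings.get(label) ?? [];
    samples.push(elapsed);
    timings.set(label, samples);
  }
}

// Placeholder stages (assumptions, not real image processing).
function open_image_file(path: string): number[] {
  return Array.from({ length: 1024 }, (_, i) => (i + path.length) % 256);
}

function decode_image_data(file: number[]): number[] {
  return file.map((byte) => 255 - byte);
}

function resize_image(data: number[], size: number): number[] {
  return data.slice(0, size);
}

function process_requested_image(path: string, size: number): number[] {
  const file = timed("open_image_file", () => open_image_file(path));
  const data = timed("decode_image_data", () => decode_image_data(file));
  return timed("resize_image", () => resize_image(data, size));
}

const requestCount = 100;
for (let i = 0; i < requestCount; i++) {
  process_requested_image("cat.png", 64);
}

// 3 stages * 2 clock reads per stage * 100 requests = 600
console.log(`clock reads added by instrumentation: ${timerCalls}`);
```

Every instrumented stage adds two clock reads per invocation, so the number of probes scales with both how finely the pipeline is sliced and how often it runs. Counting the reads this way is one cheap way to judge whether a given level of granularity is worth its overhead.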

what now?

This is also called the probe effect, or observer effect: the act of measuring changes the thing being measured. It's important to be aware of this trade-off when profiling systems, and to choose the right level of measurement. And perhaps, in a philosophical sense, we should accept that some aspects of reality are inherently uncertain and unknowable, and that our attempts to measure and understand them will always interfere with them.

We can never know anything.

Footnotes

- The reduced Planck constant is defined as $\hbar = \frac{h}{2\pi}$, where $h$ is the Planck constant.